Why You Need Multi-Armed Bandit Testing To Market Your Game

The gaming industry is ever-evolving. Although there are best practices and templates for game design, creating a game that will be a hit with players still has considerable challenges. Why? Because it’s not easy to pre-determine how players will respond to the game.

Games have an infinite number of variations and design choices, and developers need to choose which content to promote to achieve improved user metrics. There is no right option for all gamers since people have their own preferences.

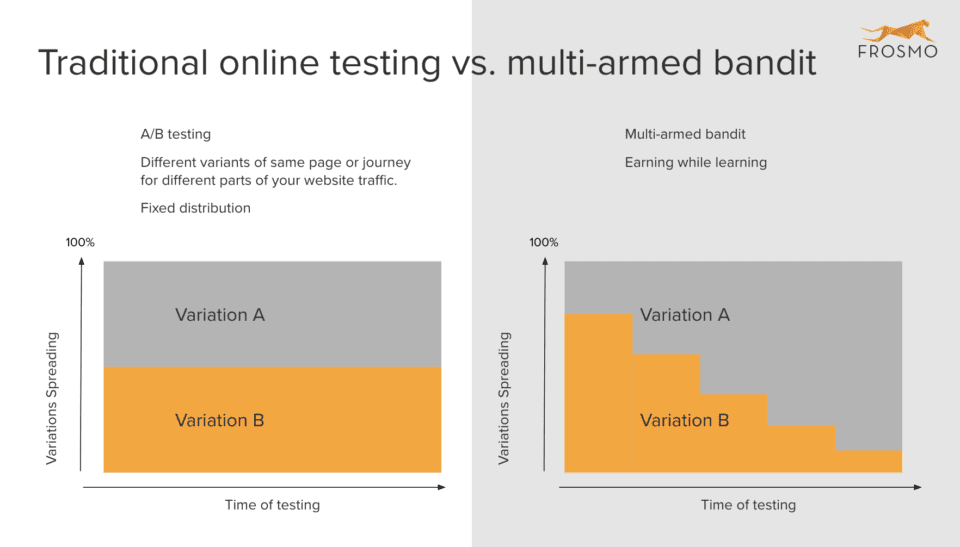

So, how do platforms like Netflix and Steam customize their promotions to users? They use multi-armed bandit (MAB) testing, which systematically allocates more traffic to the best-performing variations and less to underperforming ones. Unlike A/B testing, which goes through two distinct periods of exploration and then exploitation, a bandit test is adaptive: it runs both at the same time to maximize your primary test metric across all the variations.

Before we explore how multi-armed bandit testing works, let’s look at why A/B testing might not work best for you.

The problem of testing promotions in games

The main problem with using A/B testing to determine the best-performing variations of game marketing content is that it does not maximize revenue for the developer. A/B tests take longer to reach statistical significance, and during this time a fixed share of your traffic keeps going to poorly converting variants.

Multi-armed bandit testing is a type of testing that uses machine learning to favor the better-performing variations in a set. For the purposes of marketing, these are ad sets. It is named after a thought experiment in which a gambler must choose between different slot machines (“one-armed bandits”) with varying payouts in order to maximize the amount of money they receive. They could estimate payout chances by slowly playing every machine equally and noting down the data (A/B testing), or by shifting their play toward the machines that are paying out best while still collecting data (MAB testing).

MAB testing produces faster results since there is no need to wait for a single winning variation. Bandit tests can wind down pure exploration much earlier than A/B tests because they typically need a smaller sample to start favoring the stronger variants. They then dynamically reallocate traffic to whichever variation is currently performing best, while poorly performing variants receive less and less traffic. It’s all about giving the audience what they want, when they want it.
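To make the reallocation concrete, here is a minimal sketch of one common bandit strategy, Thompson sampling, run against simulated players. It is an illustration only, not a description of what any particular ad platform does. The function name `run_thompson` and the conversion rates are made-up assumptions.

```python
import random


def run_thompson(true_rates, rounds, seed=0):
    """Simulate Thompson sampling over ad variants with Bernoulli conversions.

    true_rates holds hypothetical conversion rates that the algorithm
    cannot see; it only observes whether each impression converted.
    Returns how many impressions each variant received.
    """
    rng = random.Random(seed)
    n = len(true_rates)
    wins = [0] * n    # conversions observed per variant
    losses = [0] * n  # non-conversions observed per variant
    shown = [0] * n   # impressions served per variant

    for _ in range(rounds):
        # Draw a plausible conversion rate for each variant from its
        # Beta posterior, then show the variant with the highest draw.
        samples = [rng.betavariate(wins[i] + 1, losses[i] + 1) for i in range(n)]
        arm = samples.index(max(samples))
        shown[arm] += 1

        # Simulated player response (assumption: independent coin flips).
        if rng.random() < true_rates[arm]:
            wins[arm] += 1
        else:
            losses[arm] += 1
    return shown


if __name__ == "__main__":
    # Three hypothetical ad variants; the third converts best.
    print(run_thompson([0.05, 0.10, 0.20], rounds=5000))
```

Early on, every variant gets shown because the algorithm knows little about any of them. As conversions accumulate, the weaker variants are sampled less often, which is the "earning while learning" behavior in miniature.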

This helps developers gain as much value as possible from the leading variants during the testing lifecycle, thus avoiding the opportunity cost of promoting sub-optimal experiences to gamers. Marketing experts like to call this earning while learning. You need to learn (exploration) to figure out what works for your game and what doesn’t, but to earn (exploitation), you have to implement what you have learned.

Also, MAB testing doesn’t require constant supervision and modifications like A/B testing. Multi-armed bandit algorithms are adaptive and can continuously go about their job without the need for further input or adjustments. They also support many variations at once and will eventually converge on the best variants as they collect more data.

Still trying to figure out what MAB testing is all about? Check out the casino example in our blog here on Growth Marketing Genie: What is Multi-Armed Bandit Testing, and How Can It Help Your Brand?

Figure 1: Illustration of A/B vs. MAB testing. Source: FROSMO

Testing the best variant possible

A/B testing often presents a less-than-optimal variant as the winner. When running such tests, one option frequently appears to be performing better, and developers decide (or are forced) to end the experiment early and move everyone to the apparent winner before the results are statistically significant. Unfortunately, this often means choosing a variant whose lead would have been reversed once more data was gathered.

MAB testing, on the other hand, switches between learning how different variants perform and using the optimal variants for the best performance. It is machine learning that constantly tests and optimizes for the best response. This relieves the pressure to end a test early and settle on a poorly performing variant as the winner, as can happen with A/B testing.

There isn’t one best result

Anyone who has performed an A/B test to compare similar aspects of a promotional event will have noticed that the results aren’t always binary. Although it’s normal when planning a test to expect players to gravitate towards one of the variants, the reality is that the difference in conversion between two or more variants is usually minimal, often less than 5 percent. Sometimes, more than one variant will get great results.

This is especially true for the gaming industry, where the player base is large and its differing preferences can cancel each other out in an A/B test. A good example is a mobile game where iOS and Android users prefer different game offers or events. To ensure players like the game and keep playing it, it’s valuable to use analytics to change the game parameters dynamically to suit the users’ preferences and improve the overall experience.

Game analytics involves the collection and analysis of user data to help developers discover and understand patterns of interactions within the game. The analysis could be performed by a game marketing agency to resolve issues, develop additional content, or boost revenues by encouraging in-app purchases. Game monetization is just as important as the game itself. With competition in the gaming industry getting stiffer every day, it’s vital to find ways to make games that appeal to the target users, minimize frustrations, and maintain the interest of gamers as long as possible.

Analytics define a player’s profile by the time they spend playing the game, game progression, spending habits, and interaction with other players. This data allows developers to know the trends and features that will help them convert non-paying users into paying customers, as well as what design changes to implement to maximize retention rate.

A/B testing assumes that there is only one best offer to show the players regardless of their playing and spending habits. But the truth of the matter is that there could be two great variations, and it’d be unreasonable to expect the developer to select just one. This is where MAB works well, as it never has to choose between two variants. MAB tries to make the best decision for each individual audience member rather than treating the group the same way. It can do this based on player attributes, including past gameplay, interests, country, session count, and any other data your game has recorded about its players.
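One simple way to picture per-player decisions is to keep separate statistics for each player segment, so that each segment can settle on its own best offer. The sketch below does this with an epsilon-greedy rule. The class name `SegmentedBandit`, the segment labels, and the offer names are hypothetical, and production systems often use richer contextual models than this.

```python
import random
from collections import defaultdict


class SegmentedBandit:
    """Epsilon-greedy bandit that learns a separate winner per segment."""

    def __init__(self, variants, epsilon=0.1, seed=0):
        self.variants = list(variants)
        self.epsilon = epsilon  # share of traffic reserved for exploration
        self.rng = random.Random(seed)
        # stats[segment][variant] = [conversions, impressions]
        self.stats = defaultdict(lambda: {v: [0, 0] for v in self.variants})

    def _rate(self, segment, variant):
        conversions, impressions = self.stats[segment][variant]
        return conversions / impressions if impressions else 0.0

    def choose(self, segment):
        # Explore: occasionally show a random variant to keep learning.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.variants)
        # Exploit: show the variant with the best observed rate so far
        # for this segment (ties go to the first variant listed).
        return max(self.variants, key=lambda v: self._rate(segment, v))

    def update(self, segment, variant, converted):
        record = self.stats[segment][variant]
        record[0] += int(converted)
        record[1] += 1


if __name__ == "__main__":
    bandit = SegmentedBandit(["offer_a", "offer_b"], epsilon=0.1)
    offer = bandit.choose("ios")
    bandit.update("ios", offer, converted=True)
    print(offer)
```

Here the segment is a single label such as platform, but the same idea extends to any attribute your game records, such as country or session count.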

Games aren’t stagnant

These days, most games have constant updates, new releases, new characters, and more. They are no longer released in full, which means the marketing that worked at launch may not keep working if you rely on A/B testing: by the time the funnel stops being optimal, you have already reached statistical significance and ended the test.

Externally, the game industry is also evolving: new titles compete for the same audience, the quality of players fluctuates, and user acquisition (UA) sources change.

With MAB, the algorithm is not set up to settle on one absolute best variation in an optimization test. This differs significantly from an A/B test, where the developer picks the variant with the best outcome for online game marketing once statistical significance is achieved. But this doesn’t mean A/B testing is obsolete. Read more about that here.

While optimizing game events or offers with MAB, some traffic is still directed to non-optimal variants to check how they are performing, and the algorithm allocates more traffic to them when they start performing better. This comes back to machine learning, where there is constant testing, growing, and learning what works for different audience groups. This process minimizes regret (the value lost by showing a weaker variant), since variants are tested systematically to identify those that work well for the different audience groups being targeted by the developer.
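A minimal sketch of how a bandit can keep up with a changing game is shown below. It assumes an epsilon-greedy rule combined with a constant step size, so that recent results outweigh old ones. The class name `DiscountedBandit`, the event names, and the parameter values are illustrative assumptions, and this is only one of several ways to handle shifting player behavior.

```python
import random


class DiscountedBandit:
    """Epsilon-greedy bandit whose estimates favor recent results."""

    def __init__(self, variants, epsilon=0.1, step=0.1, seed=0):
        self.estimates = {v: 0.0 for v in variants}
        self.epsilon = epsilon  # share of traffic kept for re-checking variants
        self.step = step        # larger step = faster forgetting of old data
        self.rng = random.Random(seed)

    def choose(self):
        # Keep sending a little traffic to every variant so that a
        # variant that improves later can be noticed.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.estimates))
        return max(self.estimates, key=self.estimates.get)

    def update(self, variant, converted):
        reward = 1.0 if converted else 0.0
        old = self.estimates[variant]
        # Exponentially weighted average: move the estimate a fixed
        # fraction of the way toward the newest observation.
        self.estimates[variant] = old + self.step * (reward - old)


if __name__ == "__main__":
    bandit = DiscountedBandit(["event_a", "event_b"])
    for _ in range(50):
        bandit.update("event_a", converted=True)
    print(bandit.estimates)
```

With a plain lifetime average, a variant that converted well at launch would keep its high score long after players lost interest. The constant step size lets the estimate decay, so traffic can move to whichever variant is working now.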

Want to learn more about digital marketing?

Digital marketing is a vast industry, and you need a strong understanding to master it. That’s why we’ve compiled all of our best resources to create the ultimate guide to digital marketing. Check it out here: Everything You Need to Know About Video Game Digital Marketing.

Your game needs to be optimized to perform. While A/B testing gives you assured results (i.e. “this is the promotion that works best”), it picks a single winner that performs well for only part of your audience, rather than accepting slightly lower performance per variant across a wider audience (which will bring in more players and revenue).

Due to the ever-changing nature of the gaming industry and gamers, MAB testing is the better fit. It is backed by decades of research and algorithms that keep you constantly optimizing, serving different variants to different customers, and using the right choice for each stage of the buyer’s journey.

Do you need help setting up your game’s multi-armed bandit testing to determine winning variations much faster? Game Marketing Genie is here to help. We are a game marketing agency that specializes in creating actionable, measurable and customized game marketing strategies that meet the needs of your audience.

Need more info? Let’s chat today about how we can unlock your game’s potential.